Model APIs on Blaxel have a unique inference endpoint which can be used by external consumers and agents to request an inference execution. Inference requests are then routed on the Global Agentics Network based on the deployment policies associated with your model API.Documentation Index

Fetch the complete documentation index at: https://docs.blaxel.ai/llms.txt

Use this file to discover all available pages before exploring further.

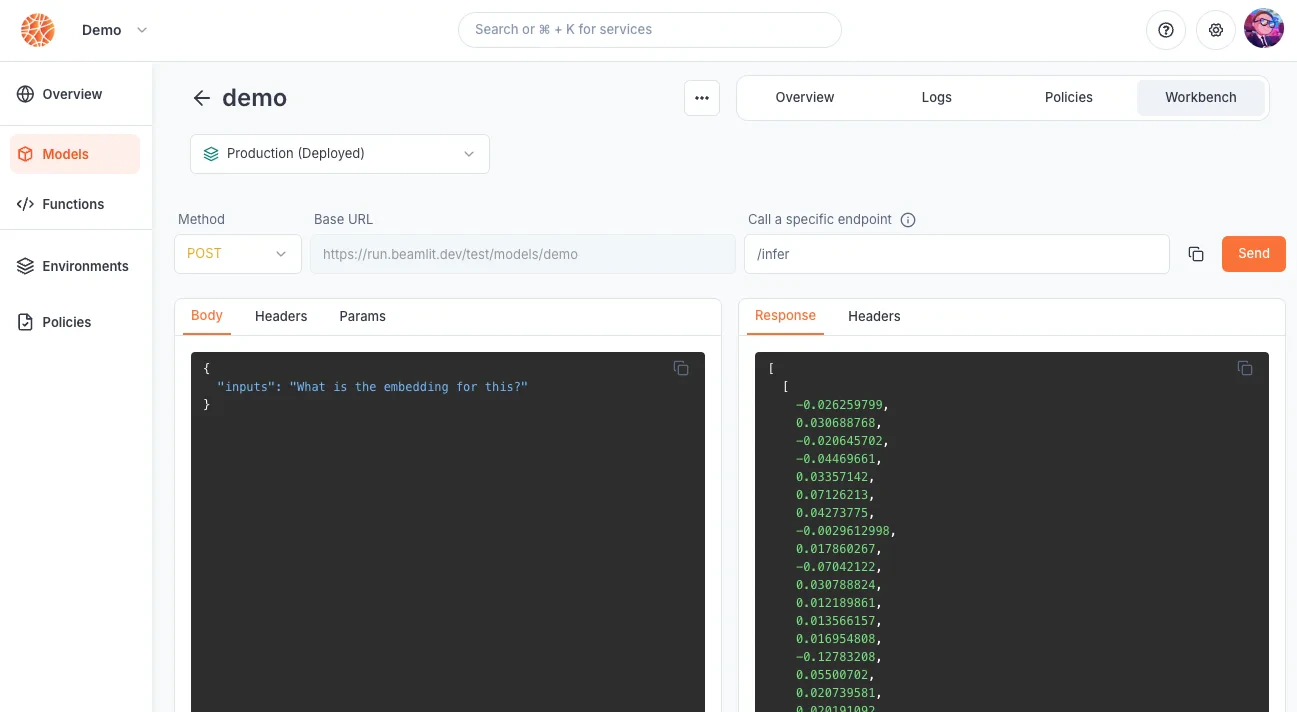

Inference endpoints

Whenever you deploy a model API on Blaxel, an inference endpoint is generated on Global Agentics Network. The inference URL looks like this:Query model API

Specific API endpoints in your model

The URL above calls your model and can be called directly. However your model may implement additional endpoints. These sub-endpoints will be hosted on this URL. For example, if you are calling a text generation model that also implements the ChatCompletions API:- calling

run.blaxel.ai/your-workspace/models/your-model(the base endpoint) will generate text based on a prompt - calling

run.blaxel.ai/your-workspace/models/your-model/v1/chat/completions(the ChatCompletions API implementation) will generate response based on a list of messages

Endpoint authentication

It is necessary to authenticate all inference requests, via a bearer token. The evaluation of authentication/authorization for inference requests is managed by the Global Agentics Network based on the access given in your workspace.Making a workload publicly available is not yet available. Please contact us at support@blaxel.ai if this is something that you need today.

Make an inference request

Blaxel API

Make a POST request to the inference endpoint for the model API you are requesting, making sure to fill in the authentication token:Blaxel CLI

The following command will make a default POST request to the model API.--path :

Blaxel console

Inference requests can be made from the Blaxel console from the model API’s Playground page.